The goal of SRE is to accelerate product development teams and keep services running in a reliable and continuous way.

This article is a collection of practical notes explaining what SRE is, what kind of work SREs do, and what types of processes they develop. The practices are based on the Google SRE workbook.

This is a long article, and if you make it to the end, I applaud you!

But don’t stop here: go and read both Google SRE books mentioned in References. Then learn about the Prometheus and ELK stacks, open source tools that help implement SRE practices.

That should keep you busy for at least a year. I wish you the best of luck!

SRE practices include

- SLOs and SLIs

- Monitoring

- Alerting

- Toil reduction

- Simplicity

SLO and SLI

An SLO (Service Level Objective) is a goal that the service provider wants to reach.

Practicality of SLOs: SLOs are tools that help determine what engineering work to prioritize. SLOs also define the concept of an error budget: the amount of unreliability a service may accumulate while still meeting its SLO.

Speaking of the error budget, let’s see how it should be approached.

Error budget approach

- There are SLOs that all stakeholders in the organization have approved

- It is possible to meet the SLOs under normal conditions

- The organization is committed to using the error budget for decision making and prioritization

These are the essential prerequisites for an error budget approach. Your job is to get as close as possible to fulfilling them.
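To make the error budget concrete, here is a minimal sketch of the arithmetic, assuming an event-based SLO (good events out of total events). The function names `error_budget` and `budget_remaining` are hypothetical and chosen only for illustration.

```python
def error_budget(slo_target: float, total_events: int) -> float:
    """Number of bad events the SLO tolerates over the measurement window."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_events <= 0:
        raise ValueError("total_events must be positive")
    return (1.0 - slo_target) * total_events


def budget_remaining(slo_target: float, total_events: int, bad_events: int) -> float:
    """Fraction of the error budget still unspent (negative when overspent)."""
    budget = error_budget(slo_target, total_events)
    return 1.0 - bad_events / budget


# A 99.9% SLO over one million requests tolerates about 1000 failed requests.
budget = error_budget(0.999, 1_000_000)
# After 250 failures, roughly three quarters of the budget is left.
remaining = budget_remaining(0.999, 1_000_000, 250)
```

A negative result from `budget_remaining` means the budget is overspent, which is the situation where an organization committed to the error budget approach would shift priority to reliability work.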

What to measure using SLIs

An SLI is the ratio between two numbers, the good events and the total events:

- Number of successful HTTP requests / total number of HTTP requests

- Number of consumed jobs in a queue / total number of jobs in a queue

An SLI is divided into a specification and an implementation. For example:

- Specification: ratio of requests loaded in < 100 ms

- Implementation: based on a) server logs or b) JavaScript on the client
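The split between specification and implementation can be sketched in code. The example below assumes the server-log implementation and a hypothetical `RequestLog` record with only a status code and a latency; a real log format will differ.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class RequestLog:
    """Hypothetical parsed server log entry."""
    status: int
    latency_ms: float


def sli_ratio(good: int, total: int) -> float:
    """Generic SLI: good events divided by total events."""
    if total == 0:
        # Assumption: a window without traffic counts as fully compliant.
        return 1.0
    return good / total


def latency_sli(logs: Iterable[RequestLog], threshold_ms: float = 100.0) -> float:
    """Specification: ratio of requests served in under threshold_ms."""
    entries = list(logs)
    good = sum(1 for entry in entries if entry.latency_ms < threshold_ms)
    return sli_ratio(good, len(entries))


logs = [
    RequestLog(200, 40.0),
    RequestLog(200, 120.0),
    RequestLog(500, 80.0),
    RequestLog(200, 99.9),
]
print(latency_sli(logs))  # 3 of the 4 requests are under 100 ms
```

The specification lives in `latency_sli` and its threshold, while the choice of where `RequestLog` entries come from (server logs or client-side JavaScript) is the implementation and can change without touching the specification.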

SLO and SLI in practice

The strategy for implementing SLOs and SLIs in your company is to start small. Consider the following aspects when working on your first SLO.

- Choose one application for which you want to define SLOs

- Decide on a few key SLI specifications that matter to your service and users

- Consider the common ways and tasks through which your users interact with the service

- Draw a high-level architecture diagram of your system

- Show the key components, the request flow, and the data flow

The result is a narrow and focused proof of concept that helps make the benefits of SLOs and SLIs concise and clear.

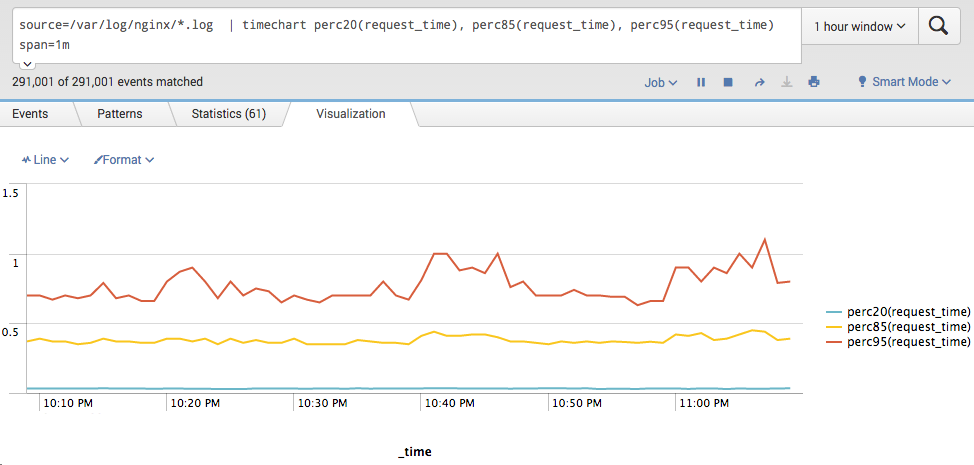

Types of SLIs

An SLI (Service Level Indicator) is a measurement the service provider uses to track the SLO goal.

There are several types of measurement to choose from, depending on the type of your service:

- Availability – The proportion of requests that result in a successful state

- Latency – The proportion of requests served faster than some time threshold

- Freshness – The proportion of data updated more recently than some time threshold. Applies to replication or data pipelines

- Correctness – The proportion of inputs that produce correct output

- Durability – The proportion of records written that can be successfully read
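Every SLI type in the list follows the same good/total pattern. As one illustration, a freshness SLI could be sketched as follows, assuming each record carries a last-updated timestamp. The function name and the 10-minute threshold are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Sequence


def freshness_sli(last_updated: Sequence[datetime], now: datetime, max_age: timedelta) -> float:
    """Proportion of records updated more recently than max_age."""
    if not last_updated:
        # Assumption: no records means nothing is stale.
        return 1.0
    fresh = sum(1 for ts in last_updated if now - ts <= max_age)
    return fresh / len(last_updated)


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
timestamps = [
    now - timedelta(minutes=1),
    now - timedelta(minutes=5),
    now - timedelta(minutes=20),
    now - timedelta(hours=2),
]
# Two of the four records are younger than 10 minutes.
print(freshness_sli(timestamps, now, timedelta(minutes=10)))
```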

Summary of first actions toward SLOs and SLIs

- Set up white-box monitoring, for example Prometheus, Datadog, or New Relic

- Develop key SLOs and an SLO response process, similar to incident management

- Make error budget enforcement decisions. Write an error budget policy. Prioritize reliability when the error budget is spent.

- Continuously improve SLO targets. Review SLOs monthly.

- Count outages and measure user happiness

- Create a training program to teach developers about SLOs and other reliability concepts

- Create an SLO dashboard

These are the essential starting points for implementing SLOs in your company. They will bring more confidence and better decision making to your services.

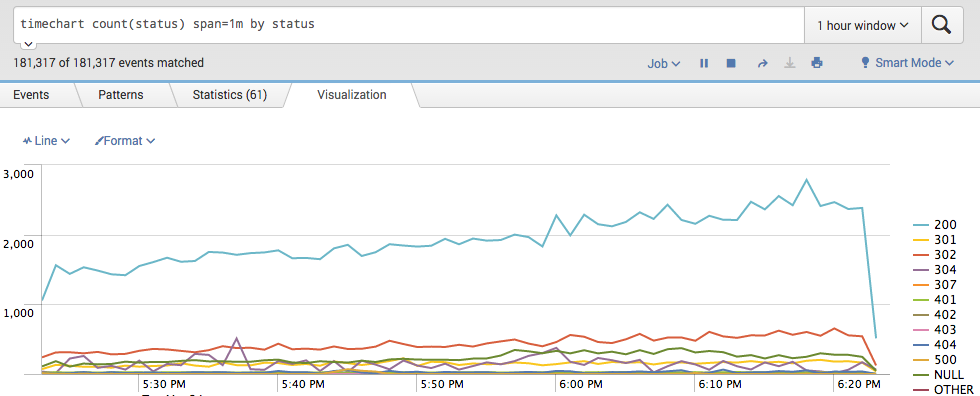

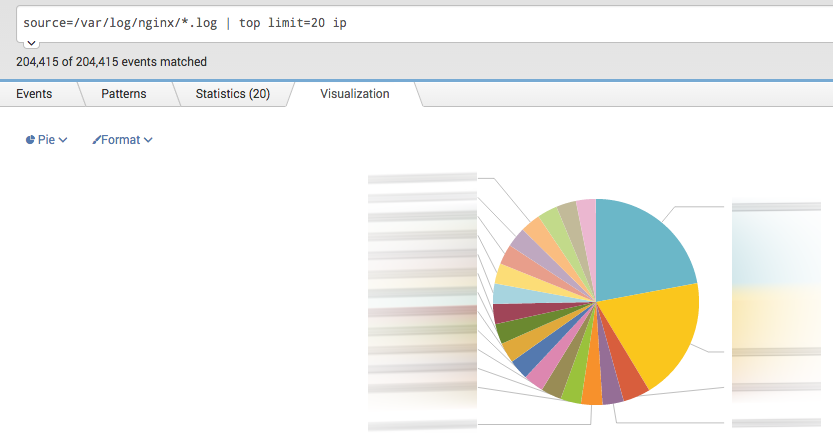

Monitoring

How SREs define monitoring

- Alert on conditions that require attention

- Investigate and diagnose issues

- Display information about the system visually

- Gain insight into system health and resource usage for long-term planning

- Compare the behavior of the system before and after a change, or between two control groups

Features of monitoring you have to know and tune

- Speed. Freshness of data.

- Data retention and calculations

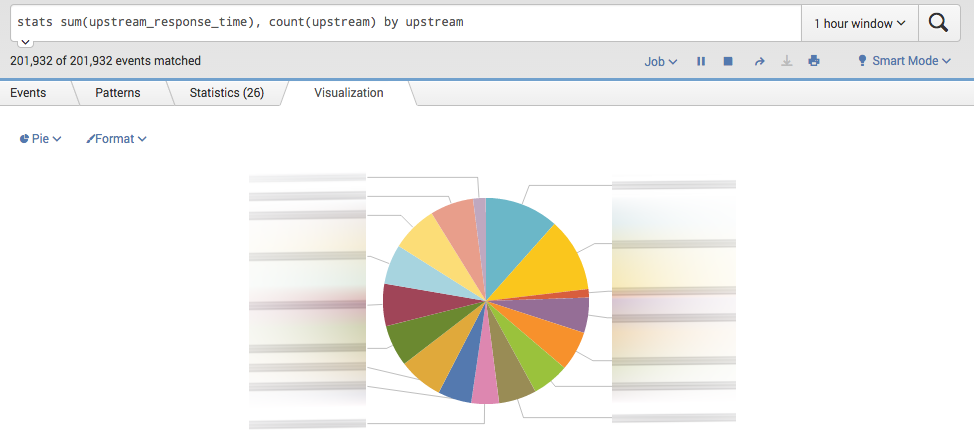

- Interfaces: graphs, tables, charts. High level or low level.

- Alerts: multiple categories, notifications flow, suppress functionality.

Sources of monitoring data

- Metrics

- Logs

That is a high-level overview. The details depend on your tools and platform. If you are just starting out, I recommend looking at open source projects such as Prometheus, Grafana, and the Elasticsearch (ELK) monitoring stack.

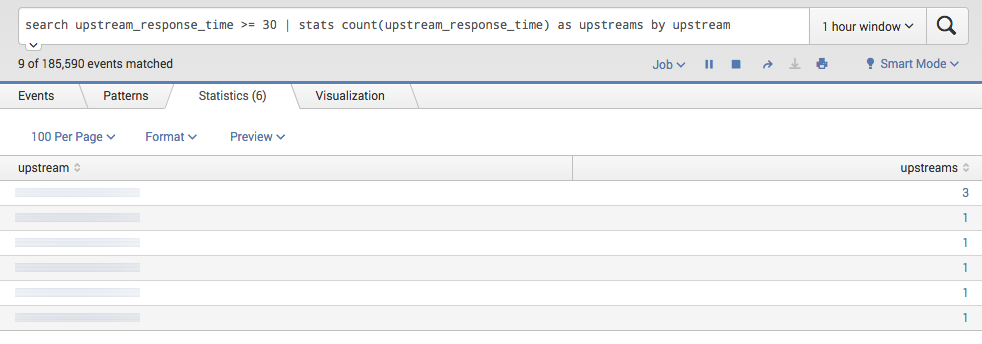

Alerting

Alerting considerations

- Precision – The proportion of events detected that were significant

- Recall – The proportion of significant events detected

- Detection time – How long it takes to send a notification under various conditions

- Reset time – How long alerts keep firing after an issue is resolved
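Precision and recall can be computed from an incident review. The sketch below assumes that fired alerts and significant events can be matched by a shared identifier; the function name and the event ids are hypothetical.

```python
from typing import Set, Tuple


def alert_precision_recall(alerted: Set[str], significant: Set[str]) -> Tuple[float, float]:
    """Return (precision, recall) for a set of alerted event ids.

    precision: share of alerted events that were actually significant
    recall:    share of significant events that triggered an alert
    """
    true_positives = len(alerted & significant)
    # Assumption: with nothing to judge, the metric defaults to 1.0.
    precision = true_positives / len(alerted) if alerted else 1.0
    recall = true_positives / len(significant) if significant else 1.0
    return precision, recall


alerted = {"e1", "e2", "e3", "e4"}   # events that paged someone
significant = {"e1", "e2", "e5"}     # events that really mattered
precision, recall = alert_precision_recall(alerted, significant)
# Half of the alerts were justified, and two of three real events were caught.
```

Low precision shows up as noisy pages, while low recall shows up as incidents nobody was told about, so both numbers are worth tracking together.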

Ways to alert

There are several strategies for setting up alerts. The recommendation is to combine several of them so that they improve alert quality from different directions.

The strategies, starting with the simplest:

- Target error rate ≥ SLO threshold.

  - Example: the error rate over a 10-minute window exceeds the SLO

  - Upsides: good recall and fast detection time

  - Downsides: low precision

- Increased alert window.

  - Example: alert if an event consumes 5% of the 30-day error budget, which corresponds to a 36-hour window

  - Upsides: good detection time

  - Downsides: poor reset time

- Increased alert duration. How long a condition must hold before the alert counts as significant.

  - Upsides: alerts can have higher precision

  - Downsides: poor recall and poor detection time

- Alert on burn rate. How fast, relative to the SLO, the service consumes the error budget.

  - Example: 5% of the error budget consumed over a 1-hour period

  - Upsides: good precision, short time window, good detection time

  - Downsides: low recall, long reset time

- Multiple burn rate alerts. The burn rate determines the severity of the alert, which leads to either a page or a ticket.

  - Upsides: good recall, good precision

  - Downsides: more parameters to manage, long reset time

- Multi-window, multi-burn-rate alerts.

  - Upsides: flexible alerting framework, good precision, good recall

  - Downsides: even harder to manage, lots of parameters to specify
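The burn rate strategies above can be sketched in a few lines. This is an illustration under stated assumptions, not a production alerting setup: it assumes a 30-day SLO period, error rates already aggregated per window, and hypothetical names (`BurnRateRule`, `RULES`, `evaluate`). The thresholds follow the example values from the Google SRE workbook and should be treated as a starting point.

```python
from dataclasses import dataclass
from typing import Dict, Optional

PERIOD_HOURS = 30 * 24  # assumption: 30-day SLO period


def burn_rate(error_rate: float, slo_target: float) -> float:
    """How many times faster than sustainable the error budget is being spent."""
    return error_rate / (1.0 - slo_target)


def burn_rate_threshold(budget_fraction: float, window_hours: float,
                        period_hours: float = PERIOD_HOURS) -> float:
    """Burn rate that consumes budget_fraction of the budget within window_hours."""
    return budget_fraction * period_hours / window_hours


@dataclass(frozen=True)
class BurnRateRule:
    long_window: str
    short_window: str
    threshold: float
    severity: str


# 2% of budget in 1h -> 14.4, 5% in 6h -> 6, 10% in 3 days -> 1
RULES = (
    BurnRateRule("1h", "5m", 14.4, "page"),
    BurnRateRule("6h", "30m", 6.0, "page"),
    BurnRateRule("3d", "6h", 1.0, "ticket"),
)


def evaluate(error_rates: Dict[str, float], slo_target: float) -> Optional[str]:
    """Return the severity of the first rule whose long AND short windows both burn too fast."""
    for rule in RULES:
        long_burn = burn_rate(error_rates[rule.long_window], slo_target)
        short_burn = burn_rate(error_rates[rule.short_window], slo_target)
        if long_burn >= rule.threshold and short_burn >= rule.threshold:
            return rule.severity
    return None
```

Requiring the short window to exceed the threshold as well is what shortens the reset time: once the errors stop, the short window recovers quickly and the alert stops firing. With the example from the list, `burn_rate_threshold(0.05, 1)` gives a burn rate of about 36 for spending 5% of the budget in one hour.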

As with monitoring, an open source option for alerting on metrics is the combination of Prometheus and Alertmanager. For logs, look at Kibana, which supports alerting on log patterns.

Toil reduction

Definition: toil is a repetitive, predictable, constant stream of tasks related to maintaining a service

What is toil

- Manual. When the tmp directory on a web server reaches 95% utilization, you need to log in and find space to clean up

- Repetitive. A full tmp directory is unlikely to be a one-time event

- Automatable. If the instructions are well defined, it’s better to automate problem detection and remediation

- Reactive. When you receive too many “disk full” alerts, they distract more than they help, so potentially high-severity alerts could be missed

- Lacks enduring value. The satisfaction of a completed task is short-lived, because the work does not prevent the issue from recurring

- Grows at least as fast as its source. The growing popularity of a service requires more infrastructure and more toil
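The tmp directory example is a natural candidate for automation. The sketch below assumes that files older than a week are safe to delete, which is an illustrative policy rather than a rule from the source. The function names are hypothetical, and the default `dry_run=True` only reports what would be removed.

```python
import shutil
import time
from pathlib import Path
from typing import List, Optional


def disk_utilization(path: Path) -> float:
    """Fraction of the filesystem holding `path` that is in use."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


def find_stale_files(directory: Path, max_age_seconds: float,
                     now: Optional[float] = None) -> List[Path]:
    """Files under `directory` not modified within max_age_seconds."""
    now = time.time() if now is None else now
    return [
        p for p in directory.rglob("*")
        if p.is_file() and now - p.stat().st_mtime > max_age_seconds
    ]


def clean_if_full(directory: Path, threshold: float = 0.95,
                  max_age_seconds: float = 7 * 24 * 3600,
                  dry_run: bool = True) -> List[Path]:
    """Detect the problem and remediate it: delete stale files once utilization reaches the threshold."""
    if disk_utilization(directory) < threshold:
        return []
    stale = find_stale_files(directory, max_age_seconds)
    if not dry_run:
        for path in stale:
            path.unlink()
    return stale
```

Run from a scheduler, a script like this replaces both the manual login and the repeated “disk full” alert, while the returned list keeps a record of what was removed.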

Potential benefits of toil automation

- Engineering work might reduce toil in the future

- Increased team morale

- Less context switching for interrupts, which raises team productivity

- Increased process clarity and standardization

- Enhanced technical skills

- Reduced training time

- Fewer outages attributable to human errors

- Improved security

- Shorter response times for user requests

How to measure toil

- Identify it.

- Measure the amount of human effort applied to this toil

- Track these measurements before, during and after toil reduction efforts
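A simple way to track these measurements is a log of hours spent on toil, tagged by phase. The sketch below assumes such a log exists as (phase, hours) pairs; the phase labels and function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def toil_hours_by_phase(entries: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Sum logged toil hours per phase, e.g. 'before', 'during', 'after'."""
    totals: Dict[str, float] = defaultdict(float)
    for phase, hours in entries:
        totals[phase] += hours
    return dict(totals)


def toil_share(toil_hours: float, total_hours: float) -> float:
    """Fraction of working time spent on toil."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    return toil_hours / total_hours


entries = [("before", 6.0), ("before", 4.0), ("during", 5.0), ("after", 2.0)]
totals = toil_hours_by_phase(entries)
# 10 toil hours in a 40-hour week before the reduction effort is a 25% share.
share_before = toil_share(totals["before"], 40.0)
```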

Toil categorization

- Business processes. Most common source of toil.

- Production interrupts. The key tasks to keep the system running.

- Product releases. Depending on the tooling and release size, they can generate toil (release requests, rollbacks, hot fixes, and repetitive manual configuration changes).

- Migrations. A large-scale migration, or even a small database structure change, is often done manually as a one-time effort. Such thinking is a mistake, because this work is repetitive.

- Cost engineering and capacity planning. Ensure a cost-effective baseline. Prepare for critical high traffic events.

- Troubleshooting

Toil management strategies in practice

Basics:

- Identify and measure

- Engineer toil out of the system

- Reject the toil

- Use SLO to reduce toil

Organizational:

- Start with human-backed interfaces. For complex business problems, start with a partially automated approach.

- Get support from management and colleagues. Toil reduction is a worthwhile goal.

- Promote toil reduction as a feature. Create a strong business case for toil reduction.

- Start small and then improve

Standardization and automation:

- Increase uniformity. Lean toward standard tools, equipment and processes.

- Assess risk within automation. Automation with admin-level privileges should have a safety mechanism that checks its actions against the system. This prevents outages caused by bugs in automation tools.

- Automate toil response. Think about how to approach toil automation. It shouldn’t eliminate human understanding of what’s going on.

- Use open source and third-party tools.
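The safety mechanism mentioned for privileged automation can be as simple as a guard that refuses to act on too much of the system at once. The sketch below is one possible shape, assuming a fleet of hosts and a caller-supplied `restart` function. `SafetyCheckError`, `guarded_restart` and the 10% limit are hypothetical.

```python
from typing import Callable, Sequence


class SafetyCheckError(Exception):
    """Raised when an automated action exceeds its safety limit."""


def guarded_restart(targets: Sequence[str], fleet_size: int,
                    restart: Callable[[str], None],
                    max_fraction: float = 0.10) -> int:
    """Restart hosts only if the batch touches at most max_fraction of the fleet."""
    if fleet_size <= 0:
        raise ValueError("fleet_size must be positive")
    if len(targets) / fleet_size > max_fraction:
        # Refuse before touching anything, so a buggy caller cannot take down the fleet.
        raise SafetyCheckError(
            f"refusing to restart {len(targets)} of {fleet_size} hosts "
            f"(limit is {max_fraction:.0%})"
        )
    for host in targets:
        restart(host)
    return len(targets)
```

The check runs before any action is taken, so a bug that selects every host fails loudly instead of causing an outage, and the error message leaves a human-readable trace of what the automation tried to do.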

In general:

- Use feedback to improve. Seek feedback from users who interact with your tools, workflows and automation.

Simplicity

Measure complexity

- Training time. How long it takes a new engineer to get up to full speed.

- Explanation time. The time it takes to explain the system internals.

- Administrative diversity. How many ways there are to configure similar settings

- Diversity of deployed configuration

- Age. How old is the system
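Some of these measures can be approximated from data you already have. As a rough sketch, the diversity of deployed configuration could be estimated by counting distinct configurations across hosts, assuming each host's configuration is available as a JSON-serializable dictionary. The function names and sample hosts are hypothetical.

```python
import json
from collections import Counter
from typing import Any, Dict, Set


def config_diversity(configs_by_host: Dict[str, Dict[str, Any]]) -> Counter:
    """Count how many hosts share each distinct configuration."""
    return Counter(
        json.dumps(config, sort_keys=True)  # canonical form, independent of key order
        for config in configs_by_host.values()
    )


def distinct_values(configs_by_host: Dict[str, Dict[str, Any]], key: str) -> Set[Any]:
    """All values a single setting takes across the fleet."""
    return {config.get(key) for config in configs_by_host.values()}


hosts = {
    "web1": {"timeout": 30, "workers": 4},
    "web2": {"workers": 4, "timeout": 30},
    "web3": {"timeout": 60, "workers": 4},
}
variants = config_diversity(hosts)            # two distinct configurations
timeouts = distinct_values(hosts, "timeout")  # {30, 60}
```

A growing number of variants over time is a signal that the system is drifting toward complexity.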

SRE work on simplicity

- SREs understand the systems end to end, which lets them prevent and fix sources of complexity

- SREs should be involved in design, system architecture, configuration, deployment processes, and elsewhere.

- SRE leadership should empower SRE teams to push for simplicity and explicitly reward these efforts.

Conclusions

- SRE practices require a significant amount of time and skilled SREs to implement well

- A lot of tools are involved in day-to-day SRE work

- SRE processes are one of the keys to the success of a tech company